Upload your consciousness into a computer with these 5 easy steps.

Will humans someday upload their consciousness into an electronic form, achieving immortality? Many people believe so, encouraged by a combination of advances in neuroscience, computing power and data storage. Perhaps the most famous proponent is Ray Kurzweil, who has estimated that this could happen in less than 40 years. The idea has come a long way from being grist for science fiction to a discussion about endpoints and technical requirements that are based (more or less) in the real world. Even though it is still out of reach and great skepticism is warranted, it’s possible to consider whether uploading your brain is achievable, what barriers remain to be surmounted and whether the promise ultimately matches the hype.

Step 1: Use the Right Hardware. Or Not.

Uploading a brain will require more than your laptop. Estimates of the storage capacity needed to hold the contents of a human brain range from 100 to 2,500 terabytes, depending on hazy assumptions about what it takes to store a memory, but let’s assume it’s a large number. The computer needed to hold a human brain and simulate its functions might be either engineered or, potentially, grown.

Neuromorphic chips are the most promising engineered platform for containing brains, because they allow computations that mimic those of the brain, and we have systems that can interface with them. The Human Brain Project has a neuromorphic platform subproject, and the sustained work on in silico mimicry of biological neurons is perhaps the likeliest route toward a neurocomputing platform that is modular, expandable, and plastic.

Could we grow such a platform?

What better repository for human consciousness could there be than a human brain – maybe even one cloned from your own brain? It’s possible to grow brain tissues called cerebral organoids from stem cells, producing cerebral cortical structures with mature cortical neuron subtypes. This technology is still in the very earliest days of development, so it’s not unreasonable to imagine improvements in this process, or similar processes, that might support a full connectome. From there, it would be a matter of imprinting on the new brain the unique patterns of activity associated with neural processing (see steps 2-3). The main problem is that these biological solutions would need the same sorts of support systems as our own brains, while we might run our electronic brains on solar power (or chlorophyll – maybe we could live a while as a tree!). Cloning brains also invariably raises ethical questions about the nature of these brain-like organs.

Predicting the right platform is like rewinding time and predicting whether the Walkman (remember that?) or the iPod would succeed – the best we can do is set the conditions that would predict success, with the understanding that new technological advances could blindside us and radically change how the problem is approached. Whatever platform is chosen will need to be capable of operating with the same basic rules as real brains, including a form of neuroplasticity; otherwise we’ll be missing an essential piece of “us”.

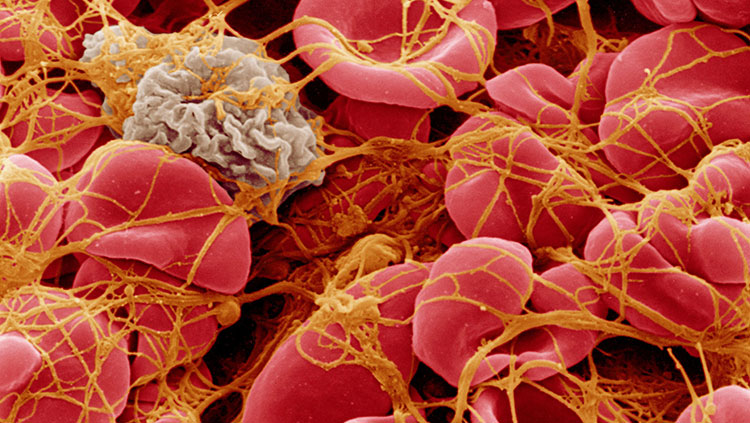

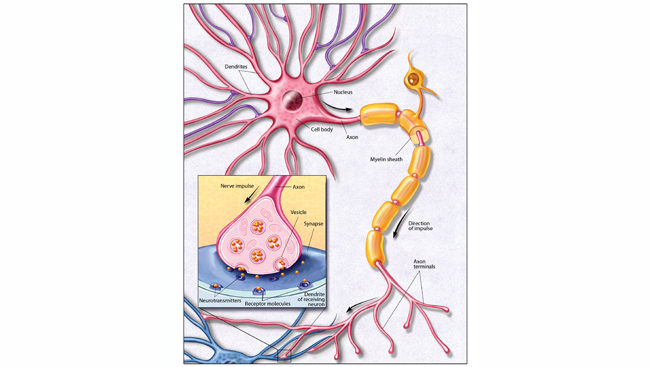

Step 2: Reconstruct the Connections.

Some representation of the brain’s connections may be needed on which to “run” the brain, so we’d need a way to determine the relevant (or effective) synaptic connectome of the individual. Sebastian Seung, in his popular TED talk, describes the connectome as the bed of a stream that determines the flow of information throughout the brain, with the stream eroding the bed (the physical connections) and changing the flow during our lives. It’s a nifty metaphor for the plastic changes that continually rewire our brains. It may very well be possible to preserve a brain well enough to define this set of connections uniquely for a human brain in our own lifetimes. Does this challenge extend beyond the brain’s connections? I would argue that you are more than your connectome, and that ideas some have of brain complexity may not be quite complex enough. It’s unlikely that even the highest resolution image could be enough to capture the brain at work.

One reason is the complex nature of synapses, which are worlds unto themselves. Thousands of proteins populate synapses and modify how information is transmitted. If each of these synapses had only about 2,000 proteins (in reality that is probably an underestimate), and each neuron received 10,000 synaptic contacts (a typically cited average value) across 89 billion of these neurons, the minimum number of states would be about 1.8 × 10^18 (2,000 × 10,000 × 89 billion). That’s a lot, but the true figure is likely to be much larger: each protein can have multiple configurations, and might form protein complexes (e.g., polymers) that expand its function. As a result, synaptic terminals are extremely dynamic, functioning differently depending on their level and patterns of activity, and often accelerating or putting the brakes on transmitter release.
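The arithmetic behind that estimate is easy to check. The following is only a back-of-envelope sketch using the round figures quoted above; the constant and function names are invented for illustration, and the result is a simple product, not a model of what a synapse actually does.

```python
# Back-of-envelope count using the round figures quoted in the text.
# All names here are illustrative, not taken from any library.
NEURONS = 89_000_000_000        # neurons in the brain, as cited above
SYNAPSES_PER_NEURON = 10_000    # typically cited average
PROTEINS_PER_SYNAPSE = 2_000    # probably an underestimate


def total_synapses(neurons: int = NEURONS,
                   per_neuron: int = SYNAPSES_PER_NEURON) -> int:
    """Number of synaptic contacts across the whole brain."""
    return neurons * per_neuron


def total_synaptic_proteins(proteins: int = PROTEINS_PER_SYNAPSE) -> int:
    """Lower bound on the protein count, one state per protein."""
    return total_synapses() * proteins


if __name__ == "__main__":
    print(f"synapses:          {total_synapses():.2e}")           # 8.90e+14
    print(f"synaptic proteins: {total_synaptic_proteins():.2e}")  # 1.78e+18
```

Allowing each protein even two configurations would turn the product into an exponent, which is why the figure above is best read as a floor.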

It’s a hum of activity occurring beneath and sometimes independently of the spiking activity of the nervous system. Some dismiss consideration of synapses as an unnecessary, boring detail, boiling the function of synapses down to connection weights that release either excitatory or inhibitory brain chemicals. It’s a great simplifying assumption that lets computational scientists focus on the “other” complexity of connections across brain networks, but it just might shortchange this local synaptic complexity, and it risks getting the flow of information in some circuits wrong. The map, after all, is not the territory. This leads to an additional step that would be needed to upload brains.

Step 3: Capture Your Brain Activity as a Dynamic System.

Dynamic and highly nonlinear relationships can be detected in the electrical activity of the brain, but they are (so far) invisible even to high resolution electron microscopy. Just as taking a picture of a Ferrari doesn’t provide information about what it would be like to punch the gas pedal, an anatomical image of a synapse would not necessarily capture cognition. Some neuroscientists hold that these nonlinear relationships are not computable, and they certainly aren’t with today’s computers. To do it at all, we’ll either need enormous improvements in computing speed and memory, or new ways of simplifying these computations to make them tractable without losing their essential information.

Another problem is that a purely anatomical connectome would capture one moment of an individual’s life: the last one. In a dynamic system it might be nearly impossible to estimate the state of the network in enough detail to determine how the system should function when re-initialized. In viewing brain tissue prepared for electron microscopy, one feels the static nature of the images. Properly done, they appear to be permanent monuments of that particular preserved brain. But just as an insect in amber was a dynamic creature before being trapped, so too are brains. We know, based on more dynamic representations of ongoing brain processes, that these connections are not static.
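Why re-initializing a dynamic system is so demanding can be shown with a toy example. The sketch below uses the logistic map, a textbook nonlinear system chosen here purely for illustration; it is not a model of neurons, and the function names and the starting values are assumptions made for this example. It runs the same system twice from starting states that differ by one part in a billion and reports how far apart the two runs drift.

```python
# Toy illustration of sensitivity to initial state in a nonlinear system.
# The logistic map is a stand-in chosen for simplicity, NOT a brain model.

def logistic_trajectory(x0: float, steps: int, r: float = 4.0) -> list[float]:
    """Iterate x -> r * x * (1 - x) starting from x0, returning every state."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def max_divergence(x0: float, eps: float, steps: int) -> float:
    """Largest gap between two runs whose starting states differ by eps."""
    a = logistic_trajectory(x0, steps)
    b = logistic_trajectory(x0 + eps, steps)
    return max(abs(p - q) for p, q in zip(a, b))


if __name__ == "__main__":
    # Hypothetical starting state and measurement error.
    for steps in (5, 20, 60):
        print(steps, max_divergence(0.2, 1e-9, steps))
```

Over a handful of steps the two runs stay almost indistinguishable, but over a longer horizon the tiny measurement error grows until the trajectories are unrelated. A captured brain state would face the same kind of problem at a vastly larger scale.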

What electron micrographs do is a bit like capturing a really good still from a movie. So in addition to a given brain’s wiring diagram, we need to capture the brain in action. We have limited ways of doing this at the resolution needed to understand which part of your unique connectome would become active when you think of your lover, or Benjamin Franklin, or being chased by a bear – each of which would presumably ping your network differently. Current generation functional magnetic resonance imaging (fMRI) or magnetoencephalography (MEG) scanning methods are probably insufficient. Let’s assume improvements in current methods, or perhaps novel methods that capture brain activity at a relevant scale or scales. Once captured, a computational framework would need to be established to run the simulation.

Here, we might count on some efficiency, since it may be possible to shortcut some of the complex synaptic functions (simplifying them, not discarding them) in favor of computational approaches that achieve the same result. This is similar to the way digital compression is used for images, but in this case the simplifications would apply to the effects of ion channels on the membrane potential of neurons, on the release of neurotransmitter or on larger scale brain rhythms. Discussions of brain uploading have so far focused on our uniqueness. But another shortcut would be to focus instead on the similarities between one brain and another, or between parts of the same brain, rather than the differences. An example is the modularity of the brain. Cortical modules are surprisingly similar, with inputs and rules of plasticity defining to a certain degree whether a particular patch of cortex is, for example, visual or tactile.

Such shortcuts would allow more computational resources and memory to be directed where they are most needed. The brain evolved because it was a pretty good solution to the problem of living on Earth. But that doesn’t mean that, like the design of a car or a line of code, the underlying engine could not be improved, achieving the same result with greater efficiency. This may also mean another simplifying assumption is possible: that we really don’t need each person’s wiring diagram. Maybe all we really need is one really good wiring diagram. This “shell” might in turn be trained by the dynamic patterns of activity that uniquely “record” the personality onto this (recordable) wiring substrate – essentially carving out the river bed and defining the flow of information and thinking for the unique personality. Would measuring the brain in action be enough to capture the uniqueness of our consciousness? At least one more step is probably needed – one that is sometimes left out of discussions about uploading brains.

Step 4: Continuity of Consciousness.

If we wish to actually realize that we made it across the digital divide of death, and are not left sitting on the other side of this gap as a befuddled clone with no sense of who we are, consciousness must not only be replicated; its continuity must also be preserved. Some think that this isn’t a problem. After all, it’s reasoned, when you go to sleep and awaken you are still you. People in comas still remember who they are when they awaken. Frozen wood frogs that hibernate through the winter at subzero temperatures presumably don’t forget they are frogs. Progress is being made on freezing and thawing worms in such a way that at least some types of memories may survive the trip. Is the process of death, preservation, uploading to a computer and reanimation anything but an example of these kinds of changes?

It’s clear there are limits to this idea: a clone doesn’t seem to have the same experience as the original (in part because there’s a different nurture applied to the nature), and as close as twins may be, they don’t typically believe themselves to be each other. Presumably, your wiring diagram in combination with the dynamics of activity that define “you” would find it to be a bumpy ride into a new electronic brain, perhaps more so than a revived, once-vitrified human. That frozen worm may learn, but how can we be sure that the worm is experiencing true “worminess” if it’s not even in the original worm body? Achieving this continuity may be a more complex and messy problem than transhumanists realize, but current scientific advances may already be providing some proof of concept. Prosthetics are being developed that incorporate a sense of touch, which would presumably be important in incorporating the prosthetic into the body’s image.

Conversely, the mind can be tricked into displacing the consciousness of body sense, even into fake repositories like a fake hand. Efforts like these suggest that the brain can adapt to and accommodate external technologies. Counting on this plasticity does mean that strategies that rely on it would need to be deployed before death, to provide an opportunity to incorporate something like, for example, a brain chip. Considered in this context, the technologies necessary for transhumanism are of the same sort that would be necessary to make a neural prosthesis to compensate for a traumatic brain injury, or memory loss. The best we can draw from current ideas is that they rely on methods that would probably, at best, make a new copy of what would be an independent conscious agent, even if they operated flawlessly.

Step 5: Deal with It.

Is the vision of an immortal afterlife where we can expand and hack our own consciousness achievable?

While I retain a healthy skepticism, I wouldn’t bet against it. Should the tiny chance that it or similar ideas would work impact one’s current quality of life? I can’t answer that. The challenges remaining are definable and therefore in principle might be solvable. But they will require that we understand much more about the brain at all of its levels of operation, not just computationally convenient ones. Grounding this in reality while avoiding hucksterism or crackpottery requires a deeper consideration of what would be produced if it all ended up working as advertised, both for the individual and for the human species. Would a simulated intelligence always be better than the natural one? We assume the answer is “yes”, because we can imagine near infinite expansion of intelligence and memory once we open the can in which we store our organic intelligence. There is also a tacit assumption that more intelligence is “better” for quality of life. But there may be a danger with a human-constructed platform that we inadvertently hardwire a certain type of intelligence by virtue of its design.

In essence, might we lose the capacity for generating different types of intelligence that may be necessary to deal with novel threats? Would engineering a brain lead to a homogenized way of thinking? We’ll need to work on that. Would this consciousness be “embodied” in a physical body (whether robot or clone), or would we be satisfied as residents of a complex simulation that produces the sensation of conscious experience, as in The Matrix? Or, just perhaps, we already live as a simulation of a past, computed in some far-flung future in which the singularity has already happened, and we just don’t realize it.